From Bias to Breach: Why Securing AI Requires More Than Just Fairness

Artificial Intelligence is no longer confined to futuristic headlines or lab demos. It’s here, embedded in the apps we use, the jobs we apply for, the content we consume, and the decisions made about us. From detecting fraud to recommending treatments, AI is transforming lives at scale.

But with this transformation comes a growing misconception: that bias is the biggest or only threat AI poses. Bias absolutely matters. An AI model trained on skewed data can reinforce discrimination in hiring, lending, policing, and beyond. We’ve seen the consequences of biased algorithms: real people sidelined, excluded, or misjudged. But fairness alone isn’t the finish line.

Because while we debate ethics, attackers are probing weaknesses. AI is increasingly becoming a target, not just of critique, but of exploitation.

Security Is the New Frontier of AI Risk

Recent events underscore the risks AI systems face. In February 2023, a Stanford student revealed how Microsoft’s Bing chatbot, codenamed “Sydney,” could be coerced into exposing its hidden system prompts through carefully crafted queries. This is a real-world case of prompt injection, showing that sophisticated models can still leak sensitive internal logic.

Around the same time, cybersecurity researchers at the University of Chicago released Nightshade (January 2024), a tool designed to poison images so malicious AI trainers cannot easily learn copyrighted material. Nightshade demonstrated that just a few hundred modified images can significantly disrupt a model’s behavior, a clear example of how data poisoning can be weaponized.

These are not hypothetical threats. They are current, documented, and escalating. Any AI model that ingests user data or learns from external sources is potentially vulnerable.

These are not theoretical threats. They’re here. And the risk isn’t limited to big tech companies. Any startup deploying an LLM, a recommendation engine, or a smart assistant is potentially opening a new front for cyberattacks often unknowingly.

Four Threats AI Builders Can’t Ignore

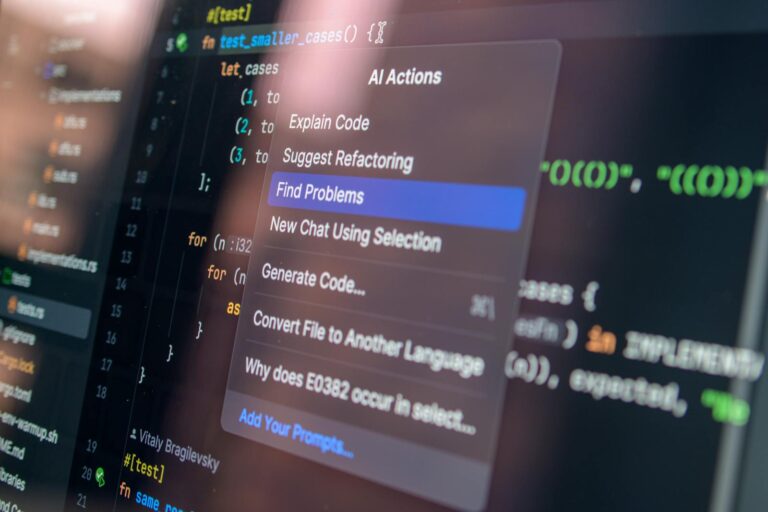

• Prompt Injection: Malicious inputs crafted to bypass content filters and safety restrictions in AI models

• Model Inversion: Attackers reconstruct sensitive data used to train a model, such as health records or customer profiles

• Data Poisoning: Corrupting training data with subtle manipulations that cause the AI to behave dangerously or incorrectly

• Output Manipulation: Coaxing AI into generating skewed or misleading outputs that serve misinformation campaigns or competitive sabotage

If your AI model accepts user input, it’s a potential attack surface, just like a login page or API endpoint.

Why Startups Should Think Beyond Accuracy and Bias

Startups often move fast, guided by a lean roadmap and limited resources. But the push to build fast can lead to critical blind spots, particularly around AI security.

Yes, your model might be fair, transparent, and performant. But can it be trusted under attack?

• Are your AI APIs rate-limited and monitored for abuse?

• Can your model be used to extract sensitive training data?

• Has your data pipeline been secured from ingestion to deployment?

• What happens if your chatbot is weaponized to spread falsehoods?

These questions must be asked early, not after an incident. And they shouldn’t just concern developers:

• Investors should evaluate AI security readiness as part of due diligence

• Regulators (e.g., under the EU AI Act) are beginning to require proof of safety, not just fairness

• End users increasingly expect transparency on how AI systems protect their data and avoid misuse

The Business Case for AI Security

Security is not just a technical issue, it’s a business imperative.

• Brand Reputation: A single data leak or model abuse incident can destroy trust overnight

• Customer Loyalty: Users are savvier than ever about privacy. Secure systems attract and retain them

• Intellectual Property Protection: Model weights and architecture are your company’s IP. Treat them like source code

• Regulatory Compliance: Meeting security standards early gives startups an edge as regulations tighten

Secure the Whole Lifecycle

It’s not about perfection. It’s about readiness. Because a chain is only as strong as its weakest link, and a compromised dataset can doom a model before it’s even deployed:

• Role-based access to datasets

• Version control for models

• Integrity verification on third-party data

Think Like an Attacker

Because stress-testing is the only way to prepare for real threats:

• Conduct adversarial red teaming and simulations

• Simulate prompt injections and output manipulation

• Include AI-specific threat modeling: map assets, threats, and controls

Monitor and Defend APIs

Because your API is a direct channel for attackers to probe, abuse, and steal your valuable model:

• Implement rate limiting

• Log and monitor all API usage

• Encrypt model weights and responses

Apply Zero Trust, Even Internally

Because internal misuse is often harder to detect:

• Authenticate and authorize every model request

• Use least-privilege principles for internal tools

• Automate credential rotation and monitor access anomalies

Prepare for the Worst

Because when your model goes rogue or gets breached, timing matters:

• Add AI-specific response flows in your Incident Response Plan

• Practice recovery drills involving model rollback or shutdown

• Assign responsibility for AI security across technical and ops teams

Fairness ? Security

As regulations push for more ethical, explainable, and fair AI, security must be part of the same conversation. A fair algorithm that leaks personal data or can be hijacked with a clever prompt is still a dangerous system.

AI security is not just about building responsibly, it’s about defending continuously. The conversation on AI has been dominated by ethics. Now, it must be dominated by defense. It’s time for every AI builder to shift their mindset from builder to guardian.

Written by Johnson Okoli